When Side Effects Trend Before Regulators React

In 2023, a mid-sized pharmaceutical company detected a potential adverse drug reaction signal not through clinical reporting systems, but through Twitter posts. Patients were describing unusual neurological symptoms weeks before formal reports reached regulators. The company’s AI system flagged the pattern early, allowing an internal investigation before the issue escalated into a public safety crisis. That moment captured a shift that many in pharmacovigilance had predicted for years: the first signal does not always come from hospitals anymore. Increasingly, it comes from social media.

If you work in healthcare, pharma, biotech, or regulatory affairs, you already know that traditional adverse event reporting systems move slowly. Patients delay reporting. Doctors underreport. Regulatory databases take months to reflect trends. Meanwhile, millions of patients describe their symptoms online in real time. The question is no longer whether social media contains drug safety data. The question is whether you are listening fast enough.

AI-driven adverse event detection from social media is no longer experimental. It is becoming a competitive advantage, a regulatory expectation, and in some cases, a legal necessity.

The Scale Problem That Forced AI Into Pharmacovigilance

Traditional pharmacovigilance relies on systems such as FAERS in the United States, EudraVigilance in Europe, and VigiBase managed by the WHO. These systems remain essential, but they suffer from a well-documented problem: underreporting.

Research published in drug safety journals consistently estimates that only 6 to 10 percent of adverse drug reactions are ever reported through official channels. That means 90 percent or more of adverse reactions, and the safety signals they could reveal, may remain invisible in the early stages.

Now compare that with social media volume:

- Twitter users post roughly 500 million tweets per day

- Reddit has more than 70 million daily users, and many of its communities discuss health conditions and medications

- Health forums and patient communities generate millions of drug-related posts every month

- Online reviews for medications often contain side effect descriptions more detailed than clinical reports

This creates a paradox. The most valuable real-world drug safety data exists in the least structured environment possible. Human teams cannot manually monitor this volume. AI can.

How AI Detects Adverse Events From Social Media

AI-driven pharmacovigilance systems use natural language processing and machine learning to scan social media posts for potential adverse events. The process involves multiple layers of analysis, and each layer solves a different problem.

First, the system identifies whether a post actually mentions a drug and a medical event. People rarely use clinical language online. They say “this medicine made my heart race” instead of “tachycardia.” AI models trained on medical ontologies translate informal language into standardized medical terminology such as MedDRA.
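The translation step described above can be illustrated with a minimal sketch. It assumes a tiny hand-written lexicon (`LAY_TO_PREFERRED`, a hypothetical name) in place of a trained model, and the preferred terms shown are illustrative rather than taken from a licensed MedDRA release. Production systems use learned models, but the input and output have the same shape.

```python
import re

# Hypothetical lay-phrase lexicon. The preferred terms are illustrative and
# would need to be checked against a licensed MedDRA release.
LAY_TO_PREFERRED = {
    "heart race": "Tachycardia",
    "heart racing": "Tachycardia",
    "can't sleep": "Insomnia",
    "throwing up": "Vomiting",
}


def normalize_events(post: str) -> list[str]:
    """Return the preferred terms whose lay phrases appear in the post."""
    # Lowercase, unify curly apostrophes, and collapse repeated whitespace.
    text = re.sub(r"\s+", " ", post.lower().replace("’", "'"))
    found = []
    for phrase, term in LAY_TO_PREFERRED.items():
        if phrase in text and term not in found:
            found.append(term)
    return found


print(normalize_events("this medicine made my heart race"))
```

A lookup table like this breaks down quickly on paraphrase and misspelling, which is the gap that trained language models are meant to close.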

Second, the system determines whether the post describes a real adverse event or just general discussion. For example:

- “This drug causes headaches” is general information

- “I started this drug and now I have headaches every day” is a potential adverse event
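To make the distinction between the two example posts concrete, here is a deliberately simple rule-based sketch. It assumes that a first-person pronoun combined with an onset cue word is a rough proxy for a personal experience report. The patterns `FIRST_PERSON` and `ONSET_CUES` are hypothetical, and real systems rely on trained classifiers rather than keyword rules.

```python
import re

# Hypothetical cue patterns; a trained classifier would replace both.
FIRST_PERSON = re.compile(r"\b(i|my|me)\b")
ONSET_CUES = re.compile(r"\b(started|began|took|taking|since|after|now)\b")


def is_potential_adverse_event(post: str) -> bool:
    """Flag posts that read like a personal experience, not general information."""
    text = post.lower()
    return bool(FIRST_PERSON.search(text)) and bool(ONSET_CUES.search(text))


for post in (
    "This drug causes headaches",
    "I started this drug and now I have headaches every day",
):
    print(post, "->", is_potential_adverse_event(post))
```

The first post is treated as general information and the second as a potential adverse event, matching the two examples above.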

Third, the system extracts key pharmacovigilance elements:

- Drug name

- Adverse event

- Patient demographics if mentioned

- Timing relationship between drug intake and event

- Outcome
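One way to picture the output of this extraction step is as a structured record. The sketch below assumes a hypothetical `ExtractedCase` class whose fields mirror the elements listed above. It is not the schema of any particular safety database.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class ExtractedCase:
    """Hypothetical record for the elements pulled from a single post."""
    drug_name: str
    adverse_event: str
    age: Optional[int] = None      # patient demographics, if mentioned
    sex: Optional[str] = None
    onset: Optional[str] = None    # timing between drug intake and event
    outcome: Optional[str] = None

    def missing_elements(self) -> list[str]:
        """List the optional elements the post did not provide."""
        return [f.name for f in fields(self) if getattr(self, f.name) is None]


case = ExtractedCase("drug X", "headache", onset="daily since starting")
print(case.missing_elements())
```

Tracking which elements are missing helps reviewers decide whether a post carries enough information to be followed up as a case.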

Fourth, signal detection algorithms identify patterns. One post means nothing. Hundreds of similar posts within a short time period create a safety signal.
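The idea of a burst of similar posts can be sketched as a simple spike check on daily counts for one drug-event pair. The window length, the multiplier `k`, and the minimum count are illustrative assumptions, not recommended settings, and real signal detection uses more robust statistics.

```python
from statistics import mean, pstdev


def spike_days(daily_counts: list[int], window: int = 7,
               k: float = 3.0, min_count: int = 5) -> list[int]:
    """Return indexes of days whose count stands far above the trailing baseline."""
    flagged = []
    for day in range(window, len(daily_counts)):
        baseline = daily_counts[day - window:day]
        # Threshold: baseline mean plus k population standard deviations.
        threshold = mean(baseline) + k * pstdev(baseline)
        if daily_counts[day] >= min_count and daily_counts[day] > threshold:
            flagged.append(day)
    return flagged


# Posts per day mentioning one drug together with one symptom (made-up numbers).
counts = [2, 3, 2, 4, 3, 2, 3, 40, 3]
print(spike_days(counts))
```

The `min_count` guard keeps a jump from one post to three from being flagged when the baseline is very quiet.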

Modern systems use deep learning models such as BERT-based medical language models and large language models trained on biomedical text, all of which build on transformer architectures. These systems handle sarcasm, slang, spelling mistakes, and multilingual posts far better than older systems, which often could not process them at all.

Real-World Examples That Changed Industry Thinking

Several real-world cases pushed regulators and pharma companies to take social media monitoring seriously.

One widely cited case involved the diabetes drug Avandia. Years before regulatory action intensified, patients were already discussing cardiovascular issues in online forums. The signal existed in public discussion long before it became a regulatory crisis.

Another example involved isotretinoin and psychiatric side effects. Online patient communities documented mood changes and depression symptoms extensively. AI retrospective studies later showed that social media signals appeared earlier than formal reporting data.

During the COVID-19 vaccine rollout, AI systems monitored social media for adverse events such as myocarditis, blood clotting concerns, and allergic reactions. Health authorities used social listening dashboards to identify misinformation trends and real safety concerns separately.

What does this tell you? Social media does not replace clinical data, but it acts as an early warning system.

Regulatory Authorities Are Already Watching

If you think regulators ignore social media data, you are behind the curve.

The FDA, EMA, and MHRA have all published guidance acknowledging the role of social media in pharmacovigilance. Regulatory agencies expect pharmaceutical companies to monitor the digital platforms they own or sponsor for adverse event reports.

Key regulatory expectations include:

- Companies must identify adverse events reported on company-managed social media pages

- Companies should have systems to capture and report valid adverse events found online

- AI tools can be used but must be validated

- Data privacy and patient consent rules still apply

The European Medicines Agency has funded multiple research programs on social media signal detection. The FDA has run pilot programs using AI to monitor online health discussions.

If you work in pharma, this creates a new compliance requirement. If a patient reports a side effect on your company’s social media page and you fail to capture it, regulators may treat that as a reporting failure.

The Technology Stack Behind AI Pharmacovigilance

To understand where this field is heading, you need to understand the technology stack that powers it. Most AI-driven adverse event detection systems include:

- Data ingestion systems that collect data from Twitter, Reddit, forums, blogs, and patient communities

- Natural language processing models trained on medical terminology

- Named entity recognition to identify drugs and adverse events

- Sentiment and intent analysis to determine whether the post describes an actual event

- Signal detection algorithms such as disproportionality analysis

- Dashboards for pharmacovigilance teams

- Automated case intake systems that convert posts into safety cases

Major technology providers in this space include Oracle, IQVIA, SAS, and several AI pharmacovigilance startups. Many large pharmaceutical companies now run internal AI signal detection teams.
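Disproportionality analysis, one of the components listed above, is often illustrated with the proportional reporting ratio (PRR). The sketch below computes it from a 2x2 table of report counts. The function name and the example counts are made up for illustration, and any threshold for treating a PRR as a signal is left to the reader's own procedures.

```python
def proportional_reporting_ratio(a: int, b: int, c: int, d: int) -> float:
    """PRR from a 2x2 table of report counts.

    a: drug of interest, event of interest
    b: drug of interest, all other events
    c: all other drugs, event of interest
    d: all other drugs, all other events
    """
    if a + b == 0 or c == 0:
        raise ValueError("PRR is undefined for these counts")
    # Equivalent to (a / (a + b)) / (c / (c + d)).
    return (a * (c + d)) / (c * (a + b))


# Made-up counts: 20 of 100 posts about the drug mention the event,
# against 100 of 10,000 posts about all other drugs.
print(proportional_reporting_ratio(20, 80, 100, 9900))  # 20.0
```

A PRR well above 1 means the event appears more often with this drug than with the comparison set. That is a prompt for review, not proof of causation.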

The Accuracy Problem and Why It Is Improving

Critics often raise a valid concern: social media data is messy, unreliable, and full of noise. That criticism was accurate ten years ago. It is less accurate now.

Early AI systems struggled with false positives. For example:

- “This exam gave me a headache” could be misclassified as a drug adverse event

- “This drug is killing me” could be sarcasm

- Misspelled drug names created detection failures
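The misspelling problem in particular can be reduced with fuzzy string matching. This is a minimal sketch using Python's standard `difflib` module. The `KNOWN_DRUGS` list and the 0.8 cutoff are illustrative assumptions, and a real system would match against a full drug dictionary that includes brand names.

```python
from difflib import get_close_matches
from typing import Optional

# Illustrative dictionary; a real one would hold thousands of names.
KNOWN_DRUGS = ["isotretinoin", "metformin", "sertraline"]


def match_drug_name(token: str, cutoff: float = 0.8) -> Optional[str]:
    """Map a possibly misspelled token to a known drug name, or None."""
    matches = get_close_matches(token.lower(), KNOWN_DRUGS, n=1, cutoff=cutoff)
    return matches[0] if matches else None


print(match_drug_name("isotretinion"))
print(match_drug_name("headache"))
```

The cutoff is a trade-off. Lower values recover more misspellings but raise the risk of mapping an unrelated word to a drug name.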

Modern AI systems improved accuracy using:

- Context-aware language models

- Medical knowledge graphs

- Human-in-the-loop validation

- Continuous model training using real pharmacovigilance cases

Recent studies show that AI systems can now identify valid adverse event cases from social media with accuracy levels between 70 percent and 90 percent depending on the dataset and drug class. That is not perfect, but pharmacovigilance has never required perfect data. It requires early signals.

Why This Matters for Drug Launch Strategy

This is where the business impact becomes clear. AI-driven adverse event detection is not just a safety tool. It is a competitive intelligence tool.

When a new drug launches, companies monitor:

- Patient sentiment

- Side effect discussions

- Off-label use discussions

- Comparison with competitor drugs

- Adherence issues

- Quality of life feedback

If you detect a side effect trend early, you can:

- Update safety labeling faster

- Inform physicians

- Adjust marketing messaging

- Prevent reputational damage

- Avoid large-scale product withdrawals

In several cases, companies that detected signals early avoided billion-dollar losses associated with late safety actions.

Ethical and Privacy Questions You Cannot Ignore

Monitoring social media for health data raises ethical questions. Patients do not always realize that their public posts may be used for pharmacovigilance. Regulations such as GDPR in Europe impose strict rules on personal data usage.

Companies must address:

- Data anonymization

- Consent requirements

- Secure data storage

- Responsible AI use

- Transparency policies
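As one small illustration of the anonymization item above, the sketch below replaces author handles with a keyed hash and masks @mentions in the post text. The function names and the 16-character truncation are hypothetical choices. Keyed hashing is pseudonymization rather than full anonymization, so it does not settle the consent or storage questions on its own.

```python
import hashlib
import hmac
import re

MENTION = re.compile(r"@\w+")


def pseudonymize_author(handle: str, secret_key: bytes) -> str:
    """Replace a handle with a keyed hash so posts can be grouped, not traced."""
    digest = hmac.new(secret_key, handle.lower().encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def scrub_post(text: str) -> str:
    """Mask @mentions of other users before the text is stored."""
    return MENTION.sub("@user", text)


key = b"example-key-store-in-a-secrets-manager"  # placeholder, not a real key
print(pseudonymize_author("@jane_doe", key))
print(scrub_post("@jane_doe I got a rash after the second dose"))
```

Using a secret key rather than a plain hash makes it harder for someone with a list of known handles to reverse the mapping.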

If companies ignore these issues, social media pharmacovigilance could trigger privacy backlash.

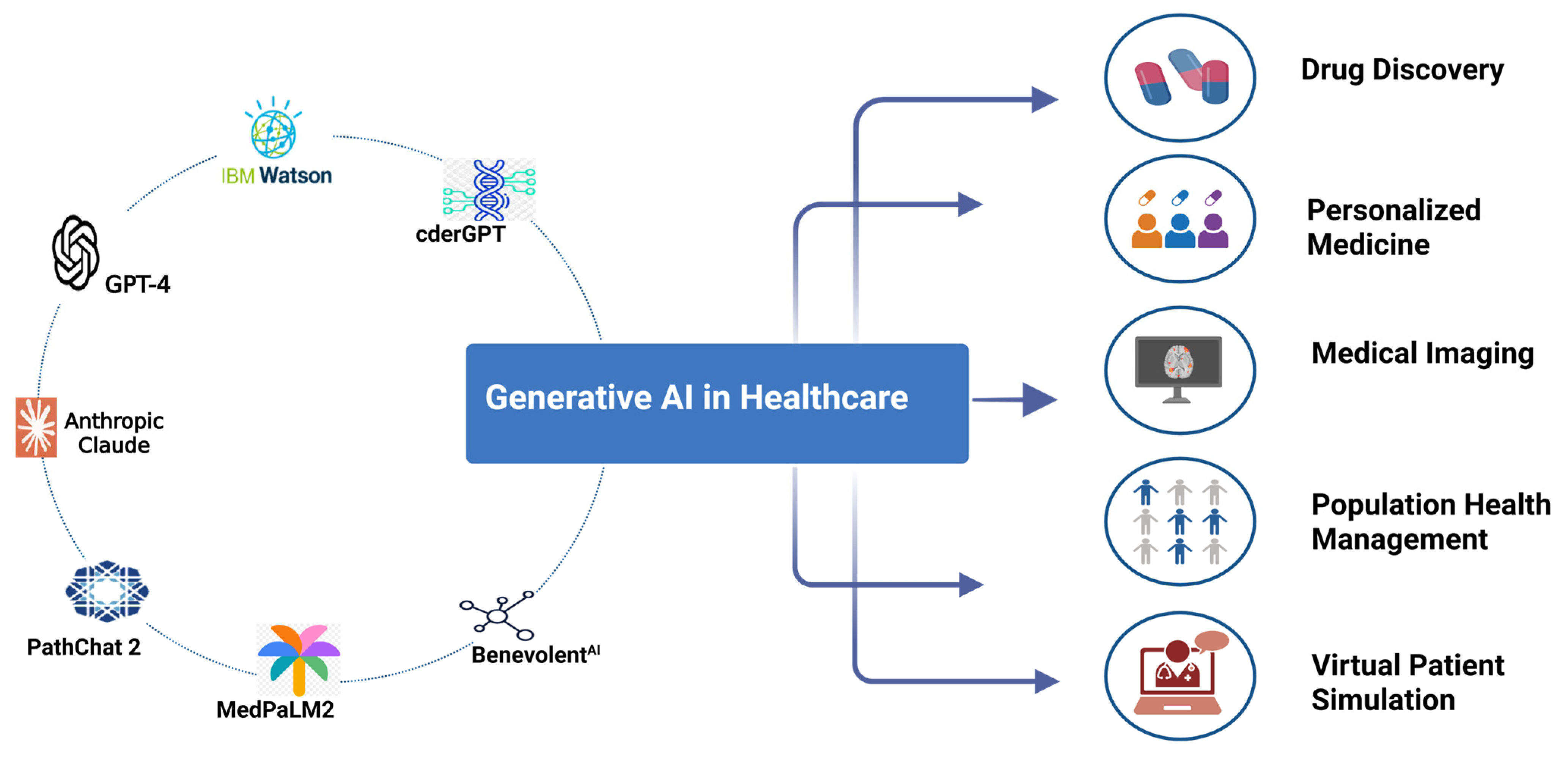

The Future: Generative AI and Real-Time Drug Safety Surveillance

Generative AI is now being layered on top of signal detection systems. This changes how pharmacovigilance teams work.

Instead of analysts reading thousands of posts, AI can now:

- Summarize emerging safety trends

- Generate safety signal reports

- Prioritize high-risk cases

- Translate posts from multiple languages

- Draft regulatory reports

- Identify patient subgroups experiencing specific side effects

This moves pharmacovigilance from reactive reporting to real-time surveillance.

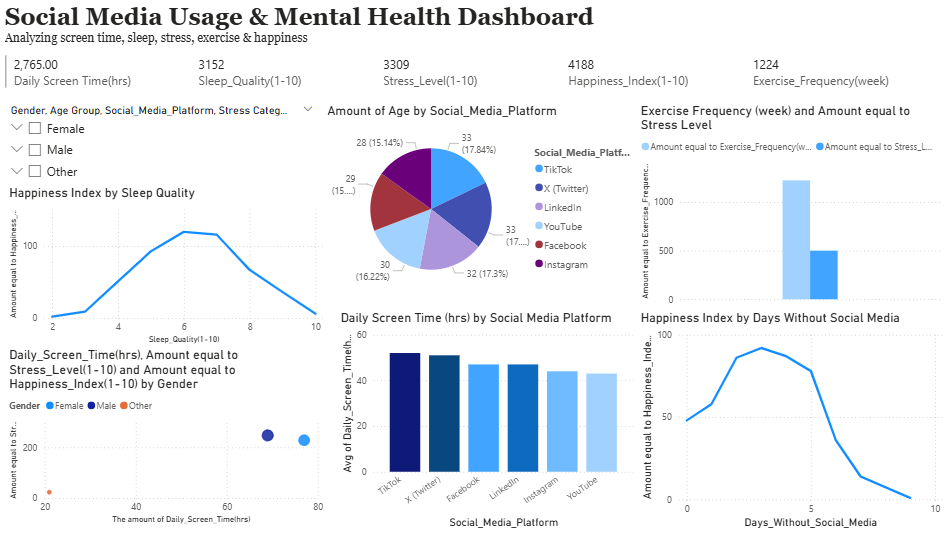

Imagine a dashboard where you can see:

- A spike in insomnia reports linked to a new antidepressant

- Geographic clusters of side effects

- Demographic patterns such as age or gender risk differences

- Comparison with clinical trial data

Systems like this already exist in some large pharmaceutical companies.

The Strategic Question You Should Be Asking

If you work in pharma, biotech, health tech, or regulatory strategy, ask yourself a direct question: If a serious adverse event signal about your product starts trending on social media tonight, would you detect it before journalists do?

Because that is the real risk.

Drug safety crises no longer begin with regulatory letters. They begin with viral posts, Reddit threads, and patient advocacy groups. By the time traditional reporting systems detect the issue, public perception may already be set.

AI-driven adverse event detection from social media is not about replacing pharmacovigilance systems. It is about closing the time gap between patient experience and safety action.

And in drug safety, time is the difference between signal detection and public crisis.

References

FDA Guidance on Postmarketing Adverse Event Reporting for Social Media

https://www.fda.gov/regulatory-information/search-fda-guidance-documents/postmarketing-adverse-event-reporting-social-media

European Medicines Agency – Social Media and Pharmacovigilance Report

https://www.ema.europa.eu/en/documents/report/social-media-pharmacovigilance_en.pdf

WHO VigiBase Pharmacovigilance Database Overview

https://www.who-umc.org/vigibase/vigibase/

IQVIA Report – Artificial Intelligence in Pharmacovigilance

https://www.iqvia.com/insights/the-iqvia-institute/reports/artificial-intelligence-in-pharmacovigilance

Nature Digital Medicine – Detecting Adverse Drug Reactions from Social Media

https://www.nature.com/articles/s41746-019-0181-1

Journal of Medical Internet Research – Social Media Pharmacovigilance

https://www.jmir.org/2020/11/e21382/