Pharmaceutical companies are adopting generative AI faster than regulators can write guidance. That gap is where the biggest risk sits. Many pharma teams now use AI to generate marketing copy, summarize clinical data, create patient education material, draft email campaigns, and even produce training content for sales representatives. The productivity gains are real. The compliance risks are also real, and they are not theoretical.

If an AI system generates a claim that goes beyond the approved label, that content becomes a regulatory risk. If an AI model produces biased patient education content, that becomes an ethical risk. If AI-generated promotional content omits safety information, that becomes a legal risk. The speed of AI content generation means mistakes can scale faster than traditional review systems can catch them.

This is why ethical guardrails for AI in pharmaceutical promotion are not optional. They are becoming a core part of compliance, governance, and commercial strategy.

AI Is Already Inside Pharmaceutical Promotion

Before discussing ethics, you need to understand where AI is already being used in pharmaceutical promotion.

Pharma companies are using AI for:

- Marketing copy generation

- HCP email campaigns

- Patient education content

- Chatbots for patient support

- Sales training material

- Market research summaries

- Competitive intelligence

- Social media content drafts

- Translation and localization

- Content personalization

- Search engine marketing content

- Website content generation

This means AI is already influencing what doctors read, what patients learn, and how drugs are presented in digital environments.

So the real question is not whether AI should be used in pharmaceutical promotion. The real question is how to control it.

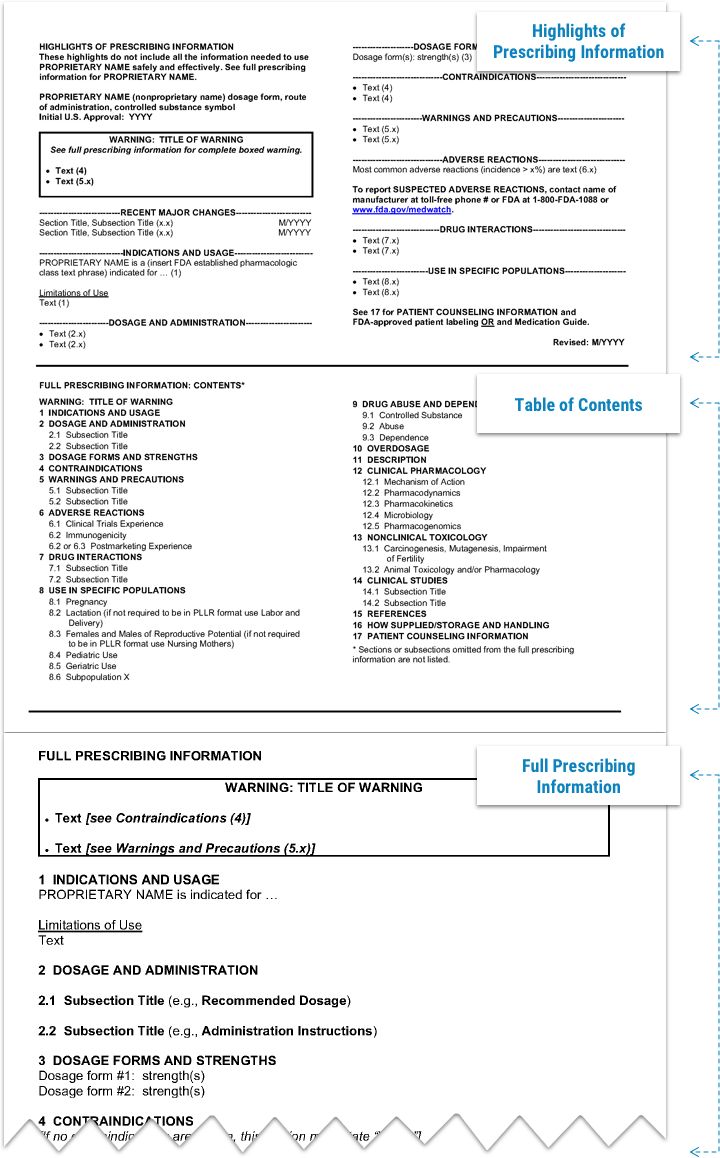

The Regulatory Reality: Promotion Is Already Highly Regulated

Pharmaceutical promotion is one of the most regulated forms of marketing in any industry. In the United States, the FDA's Office of Prescription Drug Promotion (OPDP) oversees prescription drug promotion. In Europe, advertising of medicines is governed by EU legislation and enforced mainly by national regulators. Companies must ensure that promotional content:

- Is truthful and not misleading

- Presents fair balance between benefits and risks

- Stays consistent with approved labeling

- Does not promote off-label use

- Is supported by appropriate evidence

- Includes safety information

These rules were written for human-generated content. AI-generated content introduces new risks because AI can produce content that sounds scientifically accurate but includes subtle inaccuracies, unsupported claims, or missing safety information.

If AI generates promotional content, the company remains legally responsible for that content. The regulator does not care whether a human or an AI wrote the sentence.

The Biggest Ethical Risk: AI Can Scale Mistakes

One of the biggest ethical risks in AI-driven pharmaceutical promotion is scale. A human copywriter may write one incorrect claim in one document. An AI system can generate hundreds of pieces of content in minutes. If the prompt is incorrect or the model produces inaccurate information, the error can spread across multiple channels quickly.

This creates a new type of compliance risk, and it is sharpest wherever content is produced or distributed automatically:

- Automated content generation

- Automated personalization

- Automated email campaigns

- Automated chatbot responses

- Automated social media content

If these systems are not controlled, companies may distribute non-compliant content at scale.

This is why AI governance must include content review workflows, approval systems, and audit trails.
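To make the idea of an audit trail concrete, here is a minimal Python sketch, assuming a simple in-memory, hash-chained log. The `AuditTrail` class, its field names, and the action labels are hypothetical and not taken from any specific compliance platform. Each entry stores the hash of the previous entry, so editing any past record after the fact breaks verification.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


def _hash(body: dict) -> str:
    # Sorted keys make the serialization, and therefore the hash, deterministic.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditTrail:
    """Hypothetical append-only log: each entry records the previous entry's hash."""
    entries: list = field(default_factory=list)

    def record(self, content_id: str, action: str, actor: str, detail: str = "") -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        entry = {
            "content_id": content_id,
            "action": action,    # e.g. "generated", "medical_review", "approved"
            "actor": actor,      # model identifier or reviewer ID
            "detail": detail,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "prev_hash": prev_hash,
        }
        entry["hash"] = _hash(entry)  # hash computed before the "hash" key is added
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Return False if any entry was altered or the chain was broken."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or entry["hash"] != _hash(body):
                return False
            prev_hash = entry["hash"]
        return True


trail = AuditTrail()
trail.record("EM-042", "generated", "model:draft-llm", "prompt=hcp_email_v1")
trail.record("EM-042", "medical_review", "reviewer:jdoe", "approved")
print(trail.verify())  # True
trail.entries[0]["detail"] = "edited after the fact"
print(trail.verify())  # False
```

A production system would persist entries to write-once storage and tie actor IDs to authenticated users. The hash chain only shows that the record was not altered; it says nothing about whether the content itself was compliant.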

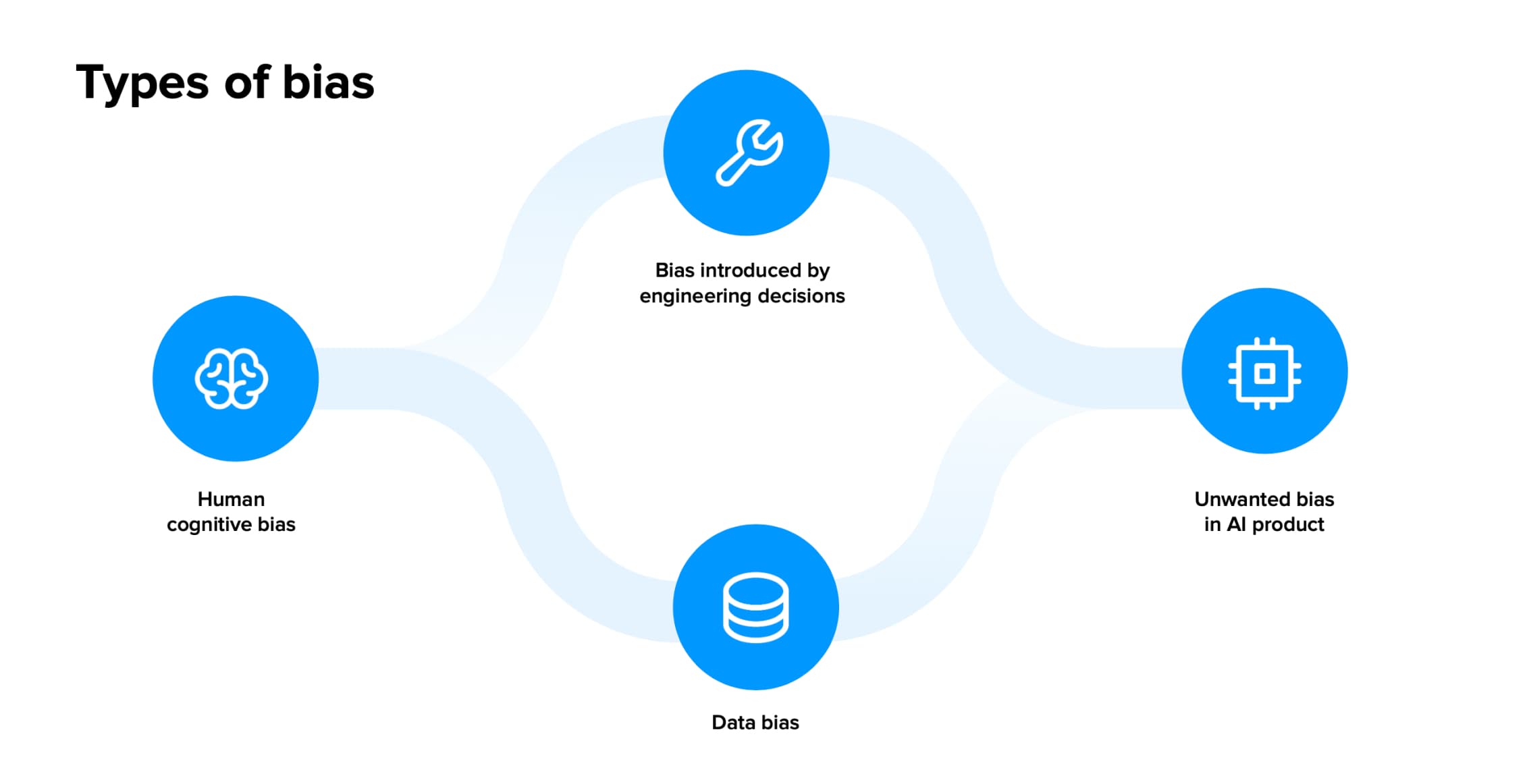

The Ethical Issue of Bias in AI-Generated Health Content

AI systems learn from historical data. If historical healthcare data contains bias, AI systems may reproduce that bias.

Examples of potential bias include:

- Underrepresentation of certain ethnic groups in clinical data

- Gender differences in symptom reporting

- Socioeconomic differences in treatment access

- Geographic differences in healthcare access

- Language bias in patient education content

If AI-generated patient education content does not reflect diverse populations, companies may unintentionally create unequal access to information.

Ethical AI governance must include bias monitoring, dataset review, and human oversight.

Transparency: Should Patients and Doctors Know AI Wrote the Content?

Another ethical question is transparency. If AI generates patient education material, should patients know? If AI generates physician marketing content, should doctors know?

Some companies are beginning to disclose AI-assisted content creation, especially for educational material. Transparency builds trust, but it also raises operational questions about how and when to disclose AI involvement.

This issue will likely become more important as regulators develop AI-specific guidance for pharmaceutical promotion.

Human Oversight Is Not Optional

One of the biggest misconceptions about AI in pharma marketing is that AI will replace human review. In reality, AI increases the need for human oversight because AI can generate content faster than humans can verify it.

Many companies adopting AI in promotion are implementing human-in-the-loop systems where:

- AI generates a first draft

- Medical team reviews clinical accuracy

- Legal team reviews compliance

- Regulatory team reviews label consistency

- Marketing team finalizes messaging

AI speeds up content creation, but humans remain responsible for accuracy and compliance.
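The workflow above can be sketched as a small state machine. This is an illustration under stated assumptions: the stage names, the strict review order, and the rule that any rejection clears all earlier sign-offs are choices made for this example, not a standard MLR process.

```python
from dataclasses import dataclass, field
from typing import Optional

# Review order assumed from the workflow above; stage names are illustrative.
REVIEW_STAGES = ["medical", "legal", "regulatory", "marketing"]


@dataclass
class ContentItem:
    content_id: str
    text: str
    source: str = "ai_draft"                       # who produced the first draft
    approvals: list = field(default_factory=list)  # stages that signed off, in order
    rejected_by: Optional[str] = None              # last stage that rejected the draft

    @property
    def next_stage(self) -> Optional[str]:
        """The stage that must review next, or None once every stage has signed off."""
        if len(self.approvals) < len(REVIEW_STAGES):
            return REVIEW_STAGES[len(self.approvals)]
        return None

    def review(self, stage: str, approved: bool) -> None:
        # Reviews must happen in order; skipping a stage is an error.
        if stage != self.next_stage:
            raise ValueError(f"{stage!r} cannot review now; waiting on {self.next_stage!r}")
        if approved:
            self.approvals.append(stage)
        else:
            # A rejection sends the draft back to the start of the workflow.
            self.rejected_by = stage
            self.approvals.clear()

    @property
    def releasable(self) -> bool:
        return self.next_stage is None


item = ContentItem("PE-7", "AI-generated patient education draft")
item.review("medical", True)
item.review("legal", True)
item.review("regulatory", False)   # label inconsistency found
print(item.next_stage)             # medical
```

Forcing strict order is a design choice: it guarantees that legal and regulatory reviewers never see a draft whose clinical accuracy has not been checked, at the cost of some parallelism.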

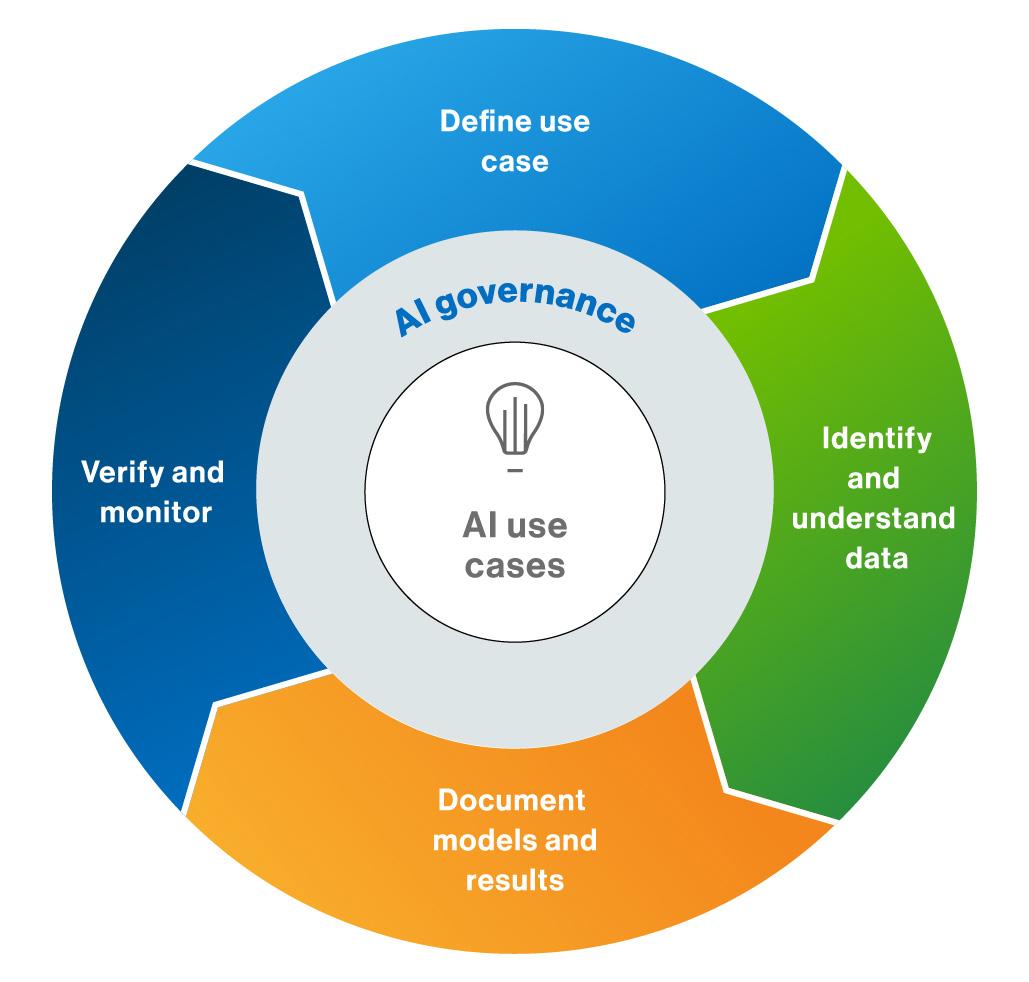

Building Ethical Guardrails: What Companies Should Actually Do

Ethical guardrails for AI in pharmaceutical promotion must be practical, not theoretical. Companies that are successfully implementing AI governance are focusing on several key areas.

Key guardrails include:

- Approved prompt libraries

- Label-based content generation systems

- Mandatory medical review for AI-generated content

- Audit trails for AI-generated content

- AI output monitoring

- Bias testing

- Data privacy controls

- Access control systems

- AI usage policies

- Employee training on AI compliance

- Vendor risk assessment for AI tools

- Documentation of AI use in content creation

These guardrails turn AI from a compliance risk into a controlled productivity tool.
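Two of these guardrails, approved prompt libraries and label-based checks, can be sketched in a few lines. The sketch assumes a hypothetical template library keyed by ID and a crude phrase-based pre-screen; the template text, `ProductX`, the safety statement, and the flagged phrases are placeholders. A check like this only catches obvious problems before medical review. It does not replace that review.

```python
from string import Template

# Hypothetical approved prompt library: only MLR-approved templates can be used,
# and users can fill in only the listed placeholders.
APPROVED_PROMPTS = {
    "hcp_email_v1": Template(
        "Write a short email to a $specialty physician about $product. "
        "Use only these approved claims: $claims. "
        "End with this safety statement verbatim: $safety"
    ),
}


def build_prompt(prompt_id: str, **fields: str) -> str:
    if prompt_id not in APPROVED_PROMPTS:
        raise KeyError(f"Prompt {prompt_id!r} is not in the approved library")
    # substitute() raises KeyError if any placeholder is left unfilled.
    return APPROVED_PROMPTS[prompt_id].substitute(**fields)


def prescreen(output: str, safety_statement: str, flagged_phrases: list[str]) -> list[str]:
    """Flag obvious problems in a draft before it goes to human review."""
    issues = []
    if safety_statement not in output:
        issues.append("missing required safety statement")
    lowered = output.lower()
    for phrase in flagged_phrases:
        if phrase.lower() in lowered:
            issues.append(f"contains flagged phrase: {phrase!r}")
    return issues


SAFETY = "See full prescribing information."
prompt = build_prompt("hcp_email_v1", specialty="cardiology", product="ProductX",
                      claims="approved claim A", safety=SAFETY)
print(prescreen("ProductX is a guaranteed cure.", SAFETY, ["guaranteed", "cure"]))
```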

The Role of Medical, Legal, and Regulatory Teams Is Expanding

AI is changing the role of Medical, Legal, and Regulatory (MLR) review teams. Instead of reviewing only final promotional materials, these teams now help design:

- Approved prompts

- Approved data sources

- AI usage policies

- Content generation workflows

- Risk classification systems

- AI content review processes

MLR teams are moving upstream into the content creation process.

Real-World Example: AI Chatbots in Patient Support

Several pharmaceutical companies have deployed AI chatbots to answer patient questions about diseases, treatment access, and support programs. These chatbots must be carefully controlled to avoid:

- Providing medical advice

- Discussing off-label uses

- Providing incorrect dosing information

- Giving incomplete safety information

Companies manage this risk by:

- Restricting chatbot responses to approved content libraries

- Including disclaimers and escalation to human agents

- Monitoring chatbot conversations

- Logging interactions for compliance review

This is an example of ethical guardrails in action.
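A minimal sketch of this restriction pattern, assuming a small keyword-matched library of pre-approved answers. The answer text, keywords, and escalation topics are hypothetical; real deployments would typically use more robust intent matching, but the control logic is the same: answer only from approved content, escalate anything sensitive or unmatched, and log every exchange.

```python
import re
from datetime import datetime, timezone

# Hypothetical approved content library: every reply text is pre-approved.
APPROVED_ANSWERS = {
    "copay": "You may be eligible for our copay support program. Call the support line to check.",
    "enroll": "You can enroll in the patient support program by phone or on the program website.",
}

# Topics the bot must never answer itself (dosing, safety, off-label questions).
ESCALATE_TOPICS = ["dose", "dosing", "side effect", "pregnan", "should i take", "off-label"]

DISCLAIMER = "This assistant cannot give medical advice. Please talk to your doctor."
ESCALATION_REPLY = DISCLAIMER + " Connecting you with a support specialist."

interaction_log = []  # retained for compliance review


def answer(question: str) -> str:
    q = question.lower()
    if any(topic in q for topic in ESCALATE_TOPICS):
        reply, route = ESCALATION_REPLY, "escalated"
    else:
        words = set(re.findall(r"[a-z]+", q))
        match = next((key for key in APPROVED_ANSWERS if key in words), None)
        if match is not None:
            reply, route = APPROVED_ANSWERS[match], f"library:{match}"
        else:
            # No approved content covers this question, so a human takes over.
            reply, route = ESCALATION_REPLY, "escalated"
    interaction_log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "question": question,
        "route": route,
    })
    return reply


print(answer("Is there copay help?"))
print(answer("What dose should I take?"))
```

Escalating on "no match" rather than letting a model improvise is the key choice here: the bot can be unhelpful, but it cannot say anything that was not approved.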

The Legal Risk: Companies Are Responsible for AI Output

From a regulatory perspective, AI-generated content is treated the same as human-generated content. If an AI system produces misleading promotional content, regulators hold the company responsible.

This creates legal risk in areas such as:

- Off-label promotion

- Misleading claims

- Incomplete risk disclosure

- Unbalanced benefit-risk presentation

- Data privacy violations

- Misuse of patient data for targeting

Companies must treat AI as a regulated content creation tool, not just a productivity tool.

The Future of Ethical AI in Pharmaceutical Promotion

Regulators are beginning to develop frameworks for AI in healthcare and pharmaceutical promotion. Future regulations will likely focus on:

- Transparency in AI-generated content

- Validation of AI models

- Auditability of AI decisions

- Bias monitoring

- Data privacy protection

- Human oversight requirements

- Risk classification of AI use cases

Companies that build ethical AI governance now will be better prepared for future regulation.

The Strategic Question Pharmaceutical Companies Must Ask

The key strategic question is not whether AI should be used in pharmaceutical promotion. AI will be used because the productivity gains are too significant to ignore.

The real question is whether companies can use AI faster than competitors while maintaining compliance, accuracy, and trust.

Ethical guardrails are not barriers to AI adoption. They are what make safe adoption possible.

Pharmaceutical promotion operates in a highly regulated environment because the stakes are high. Promotional content influences treatment decisions, and treatment decisions affect patient health. When AI enters this system, the responsibility does not decrease. It increases.

If your company uses AI in pharmaceutical promotion, you are not just managing marketing risk. You are managing regulatory risk, legal risk, reputational risk, and patient trust.

Ethical guardrails are not just about compliance. They are about maintaining trust in an industry where trust determines whether patients, doctors, and regulators believe what you say.

References

FDA Office of Prescription Drug Promotion Guidance

https://www.fda.gov/drugs/prescription-drug-advertising

European Medicines Agency Digital and AI Guidance

https://www.ema.europa.eu

World Health Organization Guidance on Ethics and AI for Health

https://www.who.int/publications/i/item/9789240029200

OECD Principles on Artificial Intelligence

https://oecd.ai/en/ai-principles

McKinsey Report – Generative AI in Life Sciences

https://www.mckinsey.com/industries/life-sciences

Deloitte – Trustworthy AI in Healthcare

https://www2.deloitte.com/global/en/pages/risk/articles/trustworthy-ai.html